[redacted] enthusiast, robot combat enjoyer, distressingly Appalachian, father of ninjas

knock knock

Wallet Inspector!

Solid call to action

Hey, we hailed our @self!

Magnificent

Apologies for missing it first time around!

Nifty, thanks!

@dgerard hey, I saw your bsky post and had an idea. Have you weighed in on Nscale and the UK’s sovereign scaffolding reserve? I hope that it gets noticed by the wider public, because that shit is 10/10 hilarious.

It gets better! According to Trashfuture, Nscale never even bothered to buy the scaffolding yard, which is still in operation.

https://trashfuturepodcast.podbean.com/e/unlocked-scaffold-to-heaven/

Is this Yuri’s Revenge?

Ars sure drank the koolaid didn’t they?

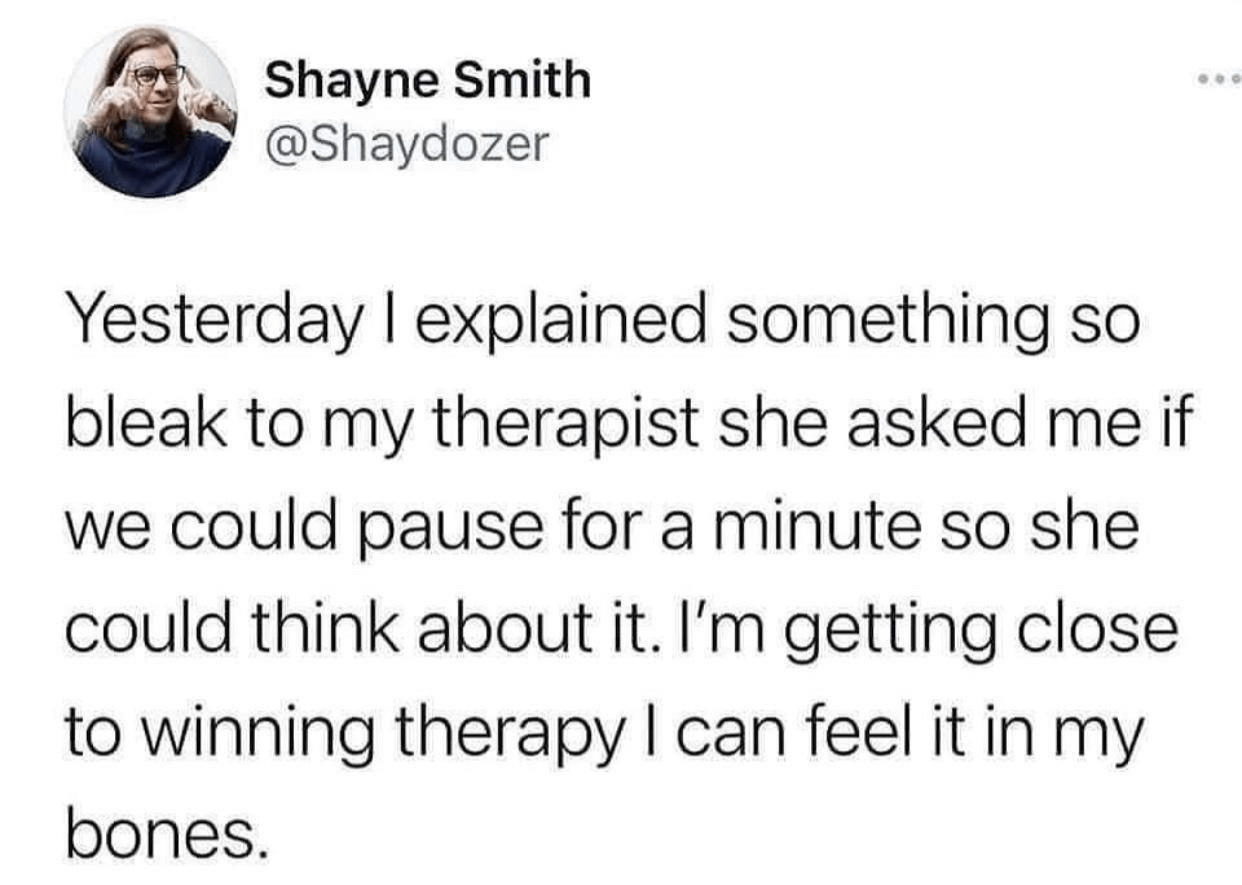

Yesterday i explained something so bleak to my therapist she asked me if we could pause for a minute so she could think about it. I’m getting close to winning therapy i can feel it in my bones.

A lateral move from pointing at the unbelievers and screeching, really

Hey, he’s posted here before!

That story is rad as hell. I was ready to run through a wall for those folks at the end. Appreciate you, Robert!

It sucks. :(

Honestly, the article reminds me of Scott Alexander, but succinct. “Here are several true things and an absolutely batshit wrong thing, presented together with equal earnestness.”

The wrong thing being “Believing that LLMs are trash is a mental disorder (not really but wink wink).”

Why do this now, when it’s all coming apart? It’s baffling.

A hackernews notices that HN autoflags 404 Media articles. A little downthread, dang bullshits unconvincingly in response.

This really seems to be the case.

Wew, Cory Doctorow sure is posting through it

https://pluralistic.net/2026/03/12/normal-technology/#bubble-exceptionalism

Labor theory of value, but worse

Delve removed from YCombinator

https://news.ycombinator.com/item?id=47634690

IIUC, it looks like Delve lied to YC about stealing another company’s Apache 2.0 licensed slopware. This is apparently a bigger sin than selling a product that does fuck-all. I guess they weren’t tall enough for this ride.

Delve claims to offer “Compliance as a Service”

https://delve.co/ (absolutely unhinged)

A link to the exposé that precipitated the divorce:

https://deepdelver.substack.com/p/delve-fake-compliance-as-a-service