the hallucinations will continue until morale improves

All year long, from January to Decuary.

The full ten months of the year?

lmao

Thanks, magical mushroom man

In addition to pushing something before it’s ready and where it’s not welcome, Apple’s own stinginess completely screwed them over.

What do LLMs need to be smart? RAM, both for their weights and for holding real data to reference. What has Apple relentlessly price-gouged and skimped on for years? Yeah, I’ll give you one guess…

For 15 years, Apple has always lagged behind Android on implementing new features, preferring to wait until it felt its implementations were ready for mainstream consumption, and that has always worked out for them. They should have stuck to that instead of jumping on the AI bandwagon with a half-baked technology that most people don’t want or need.

Unfortunately, AI is moving at such a pace that this IS the usual Apple delayed-follow. They had to feed the public hype for something like 9 months. And it doesn’t seem like a true fix for hallucination is coming, so they made their choice to move ahead. Frankly I blame Wall Street because at this point they will eviscerate anyone who doesn’t have a demonstrated AI plan and shipped products around it. If anyone is at the core of this craze, it’s investors, because they are still in the “we don’t know how big this thing is going to get” phase with AI. We’re all dealing with the consequences.

Interestingly though, I’m reminded of the early days of the Internet. People did raise the flag that the Internet wouldn’t have the same reliability as traditional media, because anyone could post anything. And that’s remained true. We have mass disinformation campaigns galore, and also specific incidents of false viral stories like “the Pope has died” which are much like this case, just driven by malicious humans instead of hallucinating software.

It makes me wonder whether the problems with AI will never be truly solved, and we will instead just digest AI and learn to live with it, as we have with the internet in general. I also see a parallel between AI and self-driving cars: every time one of those has a big fuck-up, we all shout and point and cry that the tech will never be trustworthy, meanwhile human drivers are out there killing people by the hundreds of thousands annually and we don’t even blink at that anymore.

the problems with (the current forms of generative) AI will not be solved, because they cannot be solved. They are intrinsic to the whole framework.

Error correction is also intrinsic to all of computing and telecommunications, though. That’s a loose comparison but I hope we can make progress on this and get it to a manageable state, even if zero is impossible in principle. A lot of things in life only asymptotically approach zero and yet we live.

This is not an error correction issue, though. Error correction means taking known data and adding redundancy to it so that damaged pieces can be repaired. This makes the message longer.

An LLM’s output does not contain error correction. It’s just the output. And it doesn’t contain any errors, mathematically speaking. The hallucination is the correct output: it is what the statistics gathered from its training set determined is most likely. A “correct” LLM output is mathematically indistinguishable from a “hallucination”, and always will be. A hallucination is simply “some output that some human, somewhere, doesn’t like”, and that’s uncomputable. And outputs that people subjectively consider “hallucinations” cannot be eliminated, because an LLM is, fundamentally, a probabilistic algorithm. If you added error correction to an LLM’s output, all you’d be able to recover is the LLM’s original output, “hallucinations” and all.
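A toy illustration of that last point, assuming a simple repetition code (send three copies, majority-vote each character) as the stand-in for “error correction”. The model output string is made up for the example. Decoding repairs the transmission damage, but what it faithfully recovers is whatever the model said, right or wrong:

```python
from collections import Counter


def encode(message: str, copies: int = 3) -> list[str]:
    """Repetition code: add redundancy by transmitting the message several times."""
    return [message] * copies


def decode(received: list[str]) -> str:
    """Majority vote at each character position; assumes equal-length copies."""
    recovered_chars = []
    for position in range(len(received[0])):
        votes = Counter(received_copy[position] for received_copy in received)
        recovered_chars.append(votes.most_common(1)[0][0])
    return "".join(recovered_chars)


# Hypothetical model output that a human would call a hallucination.
llm_output = "The shop on Example Street opens at 9"
sent = encode(llm_output)

# Simulate channel noise: corrupt the first character of the second copy.
sent[1] = "X" + sent[1][1:]

recovered = decode(sent)
print(recovered == llm_output)  # the transmission error is fixed; the made-up claim survives
```

Real channel codes (Hamming, Reed-Solomon and so on) are far more efficient than repetition, but they share the same property: they protect the bits, not the truth of what the bits say.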

TL;DR: “hallucinations” are subjective. A “hallucination” is not an error that can be corrected after the fact, because it is not an error in the first place.

If anyone says “What if we make an AI which specifically catches these hallucinations and then-” I will personally take a flight and come to your house and slap you.

all the advertised AI detection tools are just that. Happy slapping!

Well, the problems to be solved aren’t necessarily the technical ones. Another way of “solving” the problems is to stop trying to use it in contexts where its limitations are more trouble than they are worth.

Here it is being tasked with, and failing at, accurately summarizing news, which is ridiculous because those news articles already come with summaries: headlines.

So a fix may not mean fixing the summary, but just skipping the attempt as superfluous.

There are uses for LLMs in their current state, but they’re hard to appreciate when they’re being crammed down our throats relentlessly for things we never needed them for, and we watch them screw things up.

Well they’re half doing the right thing, just collecting app analytics to train on now so they can properly do it later, seeding the open ecosystem with MLX, stuff like that.

But… I don’t know why they shoved it in news and some other places so early.

LLMs

Emphasis on the first L in LLM. Apple’s model is specifically designed to be small so it works on phones with 8 GB of RAM (the requirement to run this).

The price gouging for RAM was only ever on computers. With phones you got what you got, and you couldn’t pay for more.

Yeah… and it kinda sucks because it’s small.

If Apple shipped with 16GB/24GB like some Android phones did well before the iPhone 16, it would be far more useful. 16-24GB (aka 14B-32B class models) is the current threshold where quantized LLMs really start to feel ‘smart,’ and they could’ve continued training a great Apache 2.0 model instead of training a tiny, meager one from scratch.
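Rough arithmetic behind those size classes, as a sketch: weight memory is about parameters × bits per weight ÷ 8 bytes. This counts the weights only and ignores the KV cache, the OS, and every other app sharing the phone’s RAM, which is why the totals need headroom. The 3B entry is just an illustrative small-model size, not a claim about Apple’s model:

```python
def weight_memory_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate memory for the weights alone, in decimal gigabytes."""
    bytes_total = params_billion * 1e9 * bits_per_weight / 8
    return bytes_total / 1e9


# Compare a small model with the 14B and 32B classes, all quantized to 4 bits.
for params in (3, 14, 32):
    print(f"{params}B @ 4-bit ≈ {weight_memory_gb(params, 4):.1f} GB")
```

At 4 bits a 14B model needs roughly 7 GB and a 32B model roughly 16 GB for weights alone, which already rules them out on an 8 GB phone.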

I don’t know how much RAM is in my iPhone 14 Pro, but I’ve never thought, “Ooh, this is slow, I need more RAM.”

Perhaps it’ll be an issue with this stupid Apple Intelligence, but I don’t care about using that on my next upgrade cycle.

My old Razer Phone 2 (circa 2019) shipped with 8GB RAM, and that (and the 120Hz display) made it feel lightning fast until I replaced it last week, and only because the microphone got gunked up with dust.

Your iPhone 14 Pro has 6GB of RAM. It’s a great phone (I just got a 16 Plus on a deal), but that will significantly shorten its longevity.

I wonder how much more efficiently RAM can be used when the manufacturer makes both the software and the hardware. It has to help, right? I don’t know what a 16 Pro feels like compared to this, but I doubt I would notice.

Your OS uses it efficiently, but fundamentally it also limits what app developers can do. They have to make apps with 2-6GB in mind.

Not everything needs a lot of RAM, but LLMs are absolutely an edge case where “more is better, and there’s no way around it,” and they aren’t the only one.

What do you mean, couldn’t pay for more? There are plenty of sub-$200 Android phones with 8GB of RAM, and 12-16GB is fairly standard on flagships these days. The Asus ROG Phone 6 is rather old and already came with 16GB, what, three years ago?

It is definitely doable, there only needs to be willingness. Apple is definitely skimping here.

The iPhone has one ram option. If you buy an iPhone 16 your only option is 8 gigs.

Ah I see. I understood you meant that bigger RAM modules aren’t available in the industry so apple couldn’t add this even if they paid more, not that we as customers couldn’t spec a phone however we’d like. That makes sense now.

SSDs?

For RAG data? It works.

But it’s too slow for the weights. What generative models fundamentally do is run a full pass through the multi-gigabyte weights for every ‘word’ (token) or diffusion step, so even 128-bit DDR5 like you find on desktop CPUs is too slow.
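A back-of-the-envelope sketch of why: if generating one token means streaming all the weights from memory once, then memory bandwidth ÷ model size caps tokens per second. The bandwidth figures are assumptions for illustration (dual-channel DDR5-5600 on a 128-bit bus, and a fast PCIe 4.0 NVMe drive at roughly 7 GB/s sequential read), and the bound ignores compute limits, caching and batching:

```python
def max_tokens_per_second(model_gb: float, bandwidth_gb_s: float) -> float:
    """Upper bound if every generated token must read all weights once."""
    return bandwidth_gb_s / model_gb


# Assumed: 5600 mega-transfers/s × 16 bytes per transfer (128-bit bus).
ddr5_gb_s = 5600e6 * 16 / 1e9
# Assumed: a fast PCIe 4.0 NVMe SSD's sequential read speed.
nvme_gb_s = 7.0
# Assumed: a 32B model quantized to 4 bits, about 16 GB of weights.
model_gb = 16.0

print(f"DDR5 bound: {max_tokens_per_second(model_gb, ddr5_gb_s):.2f} tokens/s")
print(f"SSD bound:  {max_tokens_per_second(model_gb, nvme_gb_s):.2f} tokens/s")
```

Under those assumptions, a 16 GB model tops out around 5.6 tokens/s from DDR5 and under half a token per second streaming from the SSD.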

What I find surprising in the debate about AI and hallucinations is that everyone points out that it’s very dangerous and will spread misinformation… But the real problem is our inability or unwillingness to fact-check our information.

Nobody wants to fact-check something they saw on Meta or TikTok. Nobody will. There is no difference between someone trusting some random influencer and someone trusting an AI. They are both set up to fail the same way. Both lack critical thinking.

Instead of being afraid of AI and hallucinations, we should be investing massively in teaching newer generations fact-checking and critical thinking.

AI is a great assistant, but only if you can fact-check it. If you can’t or won’t, then it’s a terrible assistant that will set you up to fail.

To be clear, I also struggle to fact-check stuff, and I have definitely been misinformed many times in the past. Nobody is really immune to that problem. IMO, AI doesn’t change much about it.

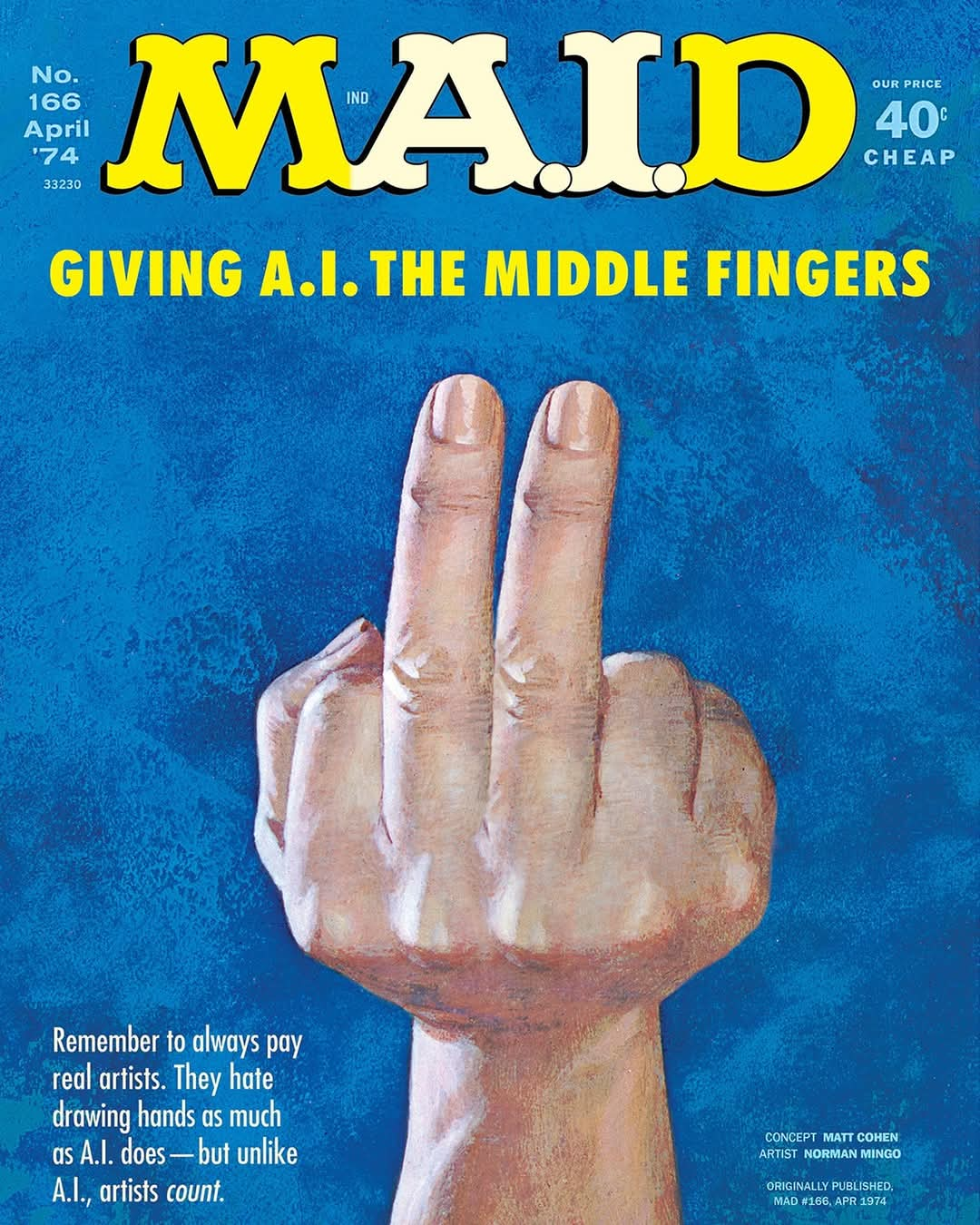

If you have to fact check everything it says, we’re better off as a species boiling the developers in dog shit.

“we’ve added a profoundly energy intensive feature that is wrong most of the time” okay, get in the vat.

get in the vat.

But you shouldn’t have to actively fact check every headline from the BBC because their headline doesn’t actually say what you read.

And there’s very little value to “summarizing messages” if you aren’t actually summarizing messages and the content doesn’t match the summary.

Yes, you should do more critical thinking, but lowering the quality of information of every interaction with the internet very clearly makes things worse.

What I find surprising is that so many people (i.e. you) still claim to fact check everything. You don’t. I guarantee it.

Most people don’t read news for a living. You can’t fact-check everything you read online; that’s physically impossible. And if you’re honest with yourself, 95% of the headlines you read are just noise and you don’t read any further. Not because you’re too stupid, but because you’re not that interested in Trump’s latest shenanigans or Italy’s economic outlook.

You didn’t even read my entire comment…

Read it entirely and you will see that this aggressive tone wasn’t necessary or justified.


This is true. It’s baffling to me that so many people ‘trust’ influencers as much as they do.

If you have to fact check it every single time you use it, it’s completely fucking useless.

I generally do a sanity test by starting a new chat and either using different words for the same request or turning it around, e.g. trying to get back my initial prompt by prompting with the results I got in the first chat.
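One way to script the rephrasing half of that sanity test, as a sketch: ask the same question several ways, each in a fresh session, and check whether the answers agree. `ask` is a hypothetical stand-in for whatever chat model you use; here it is faked with canned answers so the example runs offline. Agreement only shows consistency, not correctness, since a model can be consistently wrong:

```python
from typing import Callable


def answers_agree(ask: Callable[[str], str], prompts: list[str]) -> bool:
    """Return True if every phrasing of the question gets the same normalized answer."""
    normalized = {ask(prompt).strip().lower() for prompt in prompts}
    return len(normalized) == 1


# Hypothetical canned replies standing in for a real model's fresh chats.
canned_replies = {
    "What is the capital of France?": "Paris",
    "Which city is France's capital?": "paris ",
    "Where is the nearest comic shop in my city?": "Moonbeam Comics",
    "Name the comic shop closest to me in my city.": "Galaxy Books",
}
fake_ask = canned_replies.__getitem__

france_prompts = ["What is the capital of France?", "Which city is France's capital?"]
shop_prompts = [
    "Where is the nearest comic shop in my city?",
    "Name the comic shop closest to me in my city.",
]
print(answers_agree(fake_ask, france_prompts))
print(answers_agree(fake_ask, shop_prompts))
```

The first pair agrees after normalizing case and whitespace; the second pair names two different (made-up) shops, which is the kind of disagreement that should make you go check.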

AI-generated products can be a bad fit for news

No shit. The fact that they only discovered this once they got burned proves they never even questioned what generative AI does.

Though I’m sure half of the blame is from them asking it to tack the most clickbaity headlines on every article they can. Even human editors all but outright lie in those, of course an AI is going to hallucinate you the best title it can.

The article doesn’t state it explicitly, but the wording implies that this headline was not created by the BBC. This appears to be a service running on Apple products producing its own summary of the news article. So the BBC didn’t get burned by something they did; that’s exactly what they’re complaining about.

Oh, well that’s different. There is no reason Apple should be editorializing their content like that.

Apple wasn’t directly creating these summaries, it was their on device AI summaries of the articles bastardizing it.

That’s bullshit. You’re not absolved of all wrongdoing because you used a computer as a middle man.

Apple chose to implement AI for this purpose, they are responsible for all output.

That’s the BBC criticising Apple for indiscriminately mangling all notifications with AI, like news headlines. The BBC could boycott the Apple platform, but that’s basically their only lever to stop Apple doing this besides asking nicely.

I didn’t get that from the article but then, yeah, if it’s Apple rewriting BBC headlines like that, what the hell are they thinking.

They aren’t. They’re just putting AI on everything.

They didn’t. This is about a properly written BBC headline being butchered when summarized by Apple Intelligence, which the BBC has no control over.

claiming that Luigi Mangione […] had shot himself.

AI-generated content is prone to inaccuracies

Somebody would call that an “inaccuracy”?

If I serve you a pile of lukewarm shit on a plate and say here’s your dinner, would anybody call that an “inaccuracy”??

I’d say life and death is even more than that.

Curse you, AI from 1974!

Ahh yes, the sales and marketing hype of AI continues while the public is still being fed this bullshit.

There are very few good use cases for AI, but sales people continue to peddle it for fucking everything.

Yesterday I was driving and my Android Auto asked if I wanted to enable AI to summarize my text messages. In what world is that a good idea? Text messages are already short. Why would I need a computer trying to make it shorter and potentially fucking up all the context?

There’s a famous quote attributed to Charles Babbage with regard to his difference engine (or some other calculation machine of his invention) which goes: “On two occasions I have been asked, ‘Pray, Mr. Babbage, if you put into the machine wrong figures, will the right answers come out?’ I am not able rightly to apprehend the kind of confusion of ideas that could provoke such a question.”

Apprehension is right, Mr. Babbage. You were lucky to find yourself talking to those who, in some unconscious way, suspected that something might be wrong in their thinking, leading them to at least enquire. There are those whose ideas are so confused, or even so completely lacking, that they will assume that no matter what is put into the machine, the right answers will come out.

GrapheneOS looking more & more attractive every day

It’s great. Been using it for three or four years now. Never looked back.

“Intelligence”

LLMs are useful for a great deal of things, particularly offline translation without having to send data to Google’s servers. Sometimes I want to send a long message to friends and family but don’t want to write it in English, Polish, and Hindi.

But who thought using it for news headlines was a good idea?! Given the tens of thousands of news headlines published daily, some of them are statistically guaranteed to be falsely presented by AI.
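To put a number on “statistically guaranteed”, a sketch assuming each summary independently goes wrong with a small fixed probability. Both the 0.1% error rate and the 10,000 headlines per day are made-up illustrative figures, not measurements of Apple’s feature:

```python
def p_at_least_one_error(error_rate: float, items: int) -> float:
    """P(at least one bad summary) = 1 - P(every summary is fine), assuming independence."""
    return 1 - (1 - error_rate) ** items


daily_headlines = 10_000  # assumed volume
error_rate = 0.001        # assumed per-summary error rate (0.1%)

p = p_at_least_one_error(error_rate, daily_headlines)
print(f"P(at least one mangled headline per day): {p:.5f}")
print(f"Expected mangled headlines per day: {error_rate * daily_headlines:.0f}")
```

Even a per-summary error rate that sounds tiny makes at least one bad headline per day a near-certainty (about 0.99995 with these numbers), with around ten expected.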

E: not sure whether people are downvoting because they want Google to have their data, they don’t want people from different cultures talking to one another, or because they want AI-altered news stories.

People giving the “screw AI” downvote, while understandable, are just handing the world to corporate LLMs at the expense of locally runnable ones. Why do you think Altman, Google shareholders and such are pushing the LLM danger angle so hard?

What are the odds that all these stories about LLMs being terrible, and the crappy publicly available ones, are all just to convince us that they suck so nobody notices when actually good AI gets used?

I remember the very first thing I asked ChatGPT.

It was about a kind of shop and where the nearest one to me was. It gave me a name and a nice description immediately. When I asked for further details, like the street address, it got rather vague. In the end it told me to ask Google for specifics.

When I checked Google to confirm, it turned out that this shop did not exist. No shop with that name, no similar one… It was all just made up.

Is it even possible for it to know that? It doesn’t have your location, does it?

I have told it the name of the city.

I’m not pretending that the LLMs we get aren’t terrible. Just wondering if there aren’t better AI being used by others more quietly.

No fear. These people are the opposite of “quiet”. They never invent anything without bragging. Even when it turns out as useless later.

If you honestly believe that, you’re incredibly naive. If anything, they wouldn’t want to share what they have with those they see as idiots.

“How To Shut Down A Discussion By Immediately Jumping To Personal Attacks For No Reason, by Ogmios”

Not much of a discussion when you’re just dismissing what I’ve said by presenting a completely fictional version of reality.

And you also apparently can’t read user names to see who said what.