Want to wade into the snowy surf of the abyss? Have a sneer percolating in your system but not enough time/energy to make a whole post about it? Go forth and be mid: Welcome to the Stubsack, your first port of call for learning fresh Awful you’ll near-instantly regret.

Any awful.systems sub may be subsneered in this subthread, techtakes or no.

If your sneer seems higher quality than you thought, feel free to cut’n’paste it into its own post — there’s no quota for posting and the bar really isn’t that high.

The post-Xitter web has spawned so many “esoteric” right wing freaks, but there’s no appropriate sneer-space for them. I’m talking redscare-ish, reality-challenged “culture critics” who write about everything but understand nothing. I’m talking about reply-guys who make the same 6 tweets about the same 3 subjects. They’re inescapable at this point, yet I don’t see them mocked (as much as they should be).

Like, there was one dude a while back who insisted that women couldn’t be surgeons because they didn’t believe in the moon or in stars? I think each and every one of these guys is uniquely fucked up and if I can’t escape them, I would love to sneer at them.

(Last Stubsack for 2025 - may 2026 bring better tidings. Credit and/or blame to David Gerard for starting this.)

The developer of an LLM image description service for the fediverse has (temporarily?) turned it off due to concerns from a blind person.

Link to the thread in question

Good for them

Good for them. Not quite abandoning the project and deleting it, but it’s a good move from them nonetheless.

CW: Slop, body humor, Minions

So my boys received Minion Fart Rifles for Christmas from people who should have known better. The toys are made up of a compact fog machine combined with a vortex gun and a speaker. The fog machine component is fueled by a mixture of glycerin and distilled water that comes in two scented varieties: banana and farts. The guns make tidy little smoke rings that can stably deliver a payload tens of feet in still air.

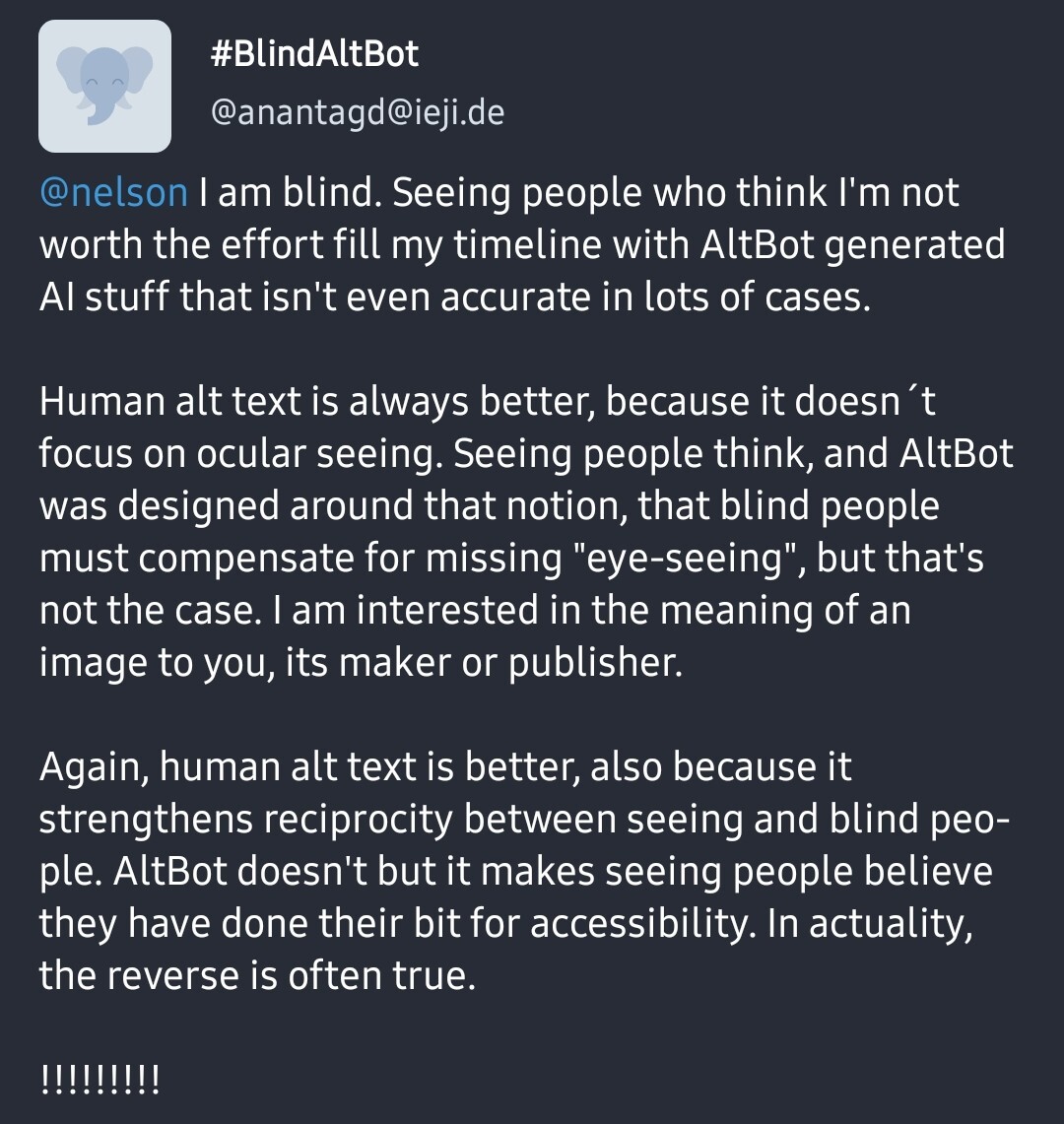

Anyway, as soon as they were fired up, Ammo Anxiety reared its ugly head, so I went in search of a refill recipe. (Note: I searched “Minions Vortex Gun Refill Recipe”) and goog returned this fartifact*:

194 dB, you say? Alvin Meshits? The rabbit hole beckoned.

The “source links” were mostly unrelated except one, which was a reddit thread that lazily cited ChatGPT generating the same text almost verbatim in response to the question, “What was the loudest ever fart?”

Luckily, a bit of detectoring turned up the true source, an ancient Uncyclopedia article’s “Fun Facts” section:

https://en.uncyclopedia.co/wiki/Fartium

The loudest fart ever recorded occurred on May 16, 1972 in Madeline, Texas by Alvin Meshits. The blast maintained a level of 194 decibels for one third of a second. Mr. Meshits now has recurring back pain as a result of this feat.

Welcome to the future!

* yeah I took the bait/I don’t know what I expected

Somewhat interestingly, 194 decibels is the loudest that a sound can be physically sustained in the Earth’s atmosphere. At that point the “bottom” of the pressure wave is a vacuum. Some enormous blast such as a huge meteor impact, a supervolcano eruption or a very large nuclear weapon can exceed that limit but only for the initial pulse.
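For anyone who wants to check that number: a rough sketch of where 194 dB comes from, assuming the usual dB SPL reference of 20 micropascals and standard sea-level pressure of 101,325 Pa. It also assumes we plug the peak pressure swing (one full atmosphere, so the trough hits vacuum) straight into the formula; using RMS pressure instead would land about 3 dB lower. The names `P_REF`, `P_ATM` and `spl_db` are just illustrative.

```python
import math

P_REF = 20e-6       # assumed reference pressure for dB SPL in air, in pascals
P_ATM = 101_325.0   # assumed standard sea-level atmospheric pressure, in pascals


def spl_db(pressure_pa: float) -> float:
    """Sound pressure level in dB relative to P_REF."""
    return 20 * math.log10(pressure_pa / P_REF)


# A wave whose trough reaches vacuum has a pressure amplitude of one atmosphere.
limit_db = spl_db(P_ATM)
print(f"{limit_db:.1f} dB")  # roughly 194 dB
```

Past that amplitude the wave can no longer be a symmetric oscillation around ambient pressure, which is why louder events only manage it as a one-off pulse.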

I suspect a 194 dB fart would blow the person in half.

Vacuum-driven total intestinal eversion, nobody’s ever seen anything like it

Apparently there’s another brand that describes its scents as “(rich durian & mellow cheese)”

Maybe if we’re lucky, Alvin Meshits can team up with https://en.wikipedia.org/wiki/Bum_Farto for the feel-good buddy comedy of the summer. Remember, the more you toot, the better you feel!

upvoted for “fartifact”

I want a vortex ring gun.

That toy sounds like someone took a vape and turned it into a smoke ring launcher. Have you tried filling it with vape juice?

right? lol but I can’t, it’s too popular with the kiddos

Wouldn’t that be the refill recipe you were looking for? Vape juice is just a mix of propylene glycol and vegetable glycerine. I think it’s the glycerine that is responsible for the “smoke”.

THC vape juice?

guess the USA invasion of Venezuela puts a flashing neon crosshair on Taiwan.

An extremely ridiculous notion that I am forced to consider right now is that it matters whether the CCP invades before or after the “AI” bubble bursts. Because the “AI” bubble is the biggest misallocation of capital in history, which means people like the MAGA government are desperate to wring some water out of those stones, anything. And for various economic reasons it isn’t doable at the moment to produce chips anywhere else than Taiwan. No chips, no “AI” datacenters, and they promised a lot of AI datacenters; in fact most of the US GDP “growth” in 2025 was promises of AI datacenters, and if you don’t count these promises the country is already in recession.

Basically I think if the CCP invades before the AI bubble pops, MAGA would escalate to full-blown war against China to nab Taiwan as a protectorate. And if we all die in nuclear fallout caused to protect chatbot profits I will be so over this whole thing

@mirrorwitch I note that China is on the verge of producing their own EUV lithography tech (they demo’d it a couple of months back) so TSMC’s near-monopoly is on the edge of disintegrating, which means time’s up for Taiwan (unless they have some strategic nukes stashed in the basement).

If China *already* has EUV lithography machines they could plausibly reveal a front-rank semiconductor fab-line—then demand conditional surrender on terms similar to Hong Kong.

Would Trump follow through then?

@cstross @mirrorwitch Having the fab is worthless. (Nearly. They’re expensive to build.) The irreplaceable thing is the specific people and the community of practice. (Same as with a TCP/IP stack that works in the wild, or bind; this is really hard to do and the accumulated knowledge involved in getting where it is now is a full career thing to acquire and brains are rate-limited.)

China most probably doesn’t have that yet.

That is, however, not in any way the point. Unification is an axiom.

@graydon @cstross @mirrorwitch I’ve had to be an expert in this stuff for decades. Which has imparted a particular bit of knowledge.

That being: CHINA FUCKING LIES ALL THE TIME. Just straight up bald-faced lying because they must be *perceived* as super-advanced.

Even stealing as much IP as they possibly can, China is many years from anything competitive. Their most advanced is CXMT, which was 19nm in '19, and had to use cheats and espionage to get to 10nm-class.

@graydon @cstross @mirrorwitch are they on the verge of their own EUV equipment? Not even remotely close. It took ASML billions and decades. And their industries are built on IP theft. That’s not jingoism; that’s first-hand experience. Just as taking shortcuts and screwing foreigners is celebrated.

I’ve sampled CXMT’s 10G1 parts. They’re not competitive. They claim 80% yield (very low) at 50k WPM. Seems about right, as 80% of the DIMMs actually passed validation.

@graydon @cstross @mirrorwitch so yes, that very much creates a disincentive to bomb their perceived enemies out of existence. For all the talk, they are fully aware of the state of things and that they are not domestically capable of getting anywhere near TSMC.

At the same time though, they are also monopolists. They engage in dumping to drive competitors out of business. So forcing the world to buy sub-standard parts from them is a good thing.

So it comes down to Winnie the Pooh’s mood.

I wouldn’t say having the fab is worthless, but more that saying you have built one is easy, while having it actually produce as specced, at scale, and not produce rubbish is hard. From what I got talking to somebody who knew a little bit more than me and who had had contact with ASML, these fabs take ages to construct properly, and that is also quite hard. The question will be how far along they are on all this; a tech demo can be quite far off from that. They have been at it for a while now, however.

Wonder if the fight between Nexperia (e: called it NXP here first by accident, apologies) and China also means they are further along on this path or not. Or if it is relevant at all.

@cstross @mirrorwitch In a bunch of ways, the unspeakable 19th and 20th centuries of Chinese history are constructed as the consequences of powerlessness; the point is to do a magic to abolish all traces of powerlessness.

Retaking control of Taiwan is not a question and cannot be a question. Policy toward Taiwan is not what Hong Kong got, they’re going to get what the Uyghur are getting. (The official stance on democracy is roughly the medieval Church’s stance on heresy.)

the medieval Church’s stance on heresy

I’m not an expert on this, but wasn’t this period not that bad, and wasn’t it more the early modern period where the trouble really started? (Esp. the witch hunts; also the organized church was actually not as bad re the witch hunts, the Spanish Inquisition didn’t consider confessions gotten via torture valid for example, and it was an early modern thing.) The medieval period tends to get a bad rap.

E: I was wrong, see below.

@Soyweiser https://en.wikipedia.org/wiki/Albigensian_Crusade

Try finding some Cathar writings.

While I think it’s entirely fair to say that the medieval period gets a bad rap in terms of equating feudalism to the later god-king aristocracies, it’s not in any way unfair to say the medieval church reacted to heresy with violence. (Generally effective and overwhelming violence; if you’re claiming sole moral authority you can’t really tolerate anyone questioning your position.)

Thanks, yeah, as I also said to Stross, I don’t know that much about the period. Most of it comes from Crusader Kings ;) (It doesn’t help that these games are sanitized to a large degree, so genocides etc. will not show up (which is the good decision btw; if they were not sanitized it would be worse, imagine Hearts of Iron for example), so they aren’t a great way to learn about the dark parts of our history), and the religion mechanics there are not that historically accurate, so I don’t put much stock in them apart from ‘some people believed in a religion named like this once’.

Anyway thanks both for correcting me and giving me homework (I’ll read up on it; any more specifics about the Cathar stuff would be appreciated, as I wouldn’t know where to start).

And I would say the latter stance on heresy only applies when your position is weak. When you are strong, some random fools not believing correctly are not of great importance, which is why I thought the church went more internally after heresies vs externally via crusades (in intent, not in practice; I know what the first crusade did in the German region etc.) later in history. Clamping down hard internally is more a sign of weakness in my mind: you need the hard power because you lack the soft power (an example now and then notwithstanding).

@Soyweiser The Cathars are the heretics being extirpated during the Albigensian Crusade.

@Soyweiser You’ve forgotten the Crusades, right? Right? Or the Clifford’s Tower Massacre (to get hyper-specific in English history) and similar events all over Europe? Or the Reconquista and the Alhambra Decree?

The crusades/Reconquista were more an externally aimed thing at the Muslims, right? (At least in intent from the organized church side; in practice not so much, so I’m not talking about those rampages.) So yeah, I was specifically talking about heresies, and I’m also very much not an expert in these things, so I don’t know. I have not forgotten about the Clifford’s Tower/Alhambra things, as I don’t know about them (I will look them up when I’m not phone posting). I was thinking more about stuff like Protestantism, witch hunts and Jan Hus (the latter does count, as it is from the late medieval period iirc).

I just don’t know very much about the period, but did know some Wiccan types who had wild ahistorical stories about the witch hunts.

E: yeah, I don’t think we should put anti-semitism under anti-heresy stuff, it being its own religion and all that. But as Graydon mentioned, the Albigensian Crusade fully counts for all my weird hangups and so I was totally wrong.

@Soyweiser @techtakes Nope. The Albigensian Crusade rampaged through the Languedoc (southern France, as it is now) and genocided the Cathars. Numerous lesser organized pogroms massacred Jews al fresco and butchered Muslims and Pagans living under Christian rule. The Alhambra Decree outlawed Islam and Judaism in Spain and set up a Holy Inquisition to persecute them: Edward I expelled all the Jews from England (he owed some of them money): and so on.

Small brain: this ai stuff isn’t going away, maybe I should invest in openAI and make a little profit along the way

Medium brain: this ai stuff isn’t going away, maybe I should invest in power companies as producing and selling electricity is going to be really profitable

Big brain: this ai stuff isn’t going away, maybe I should invest in defense contractors that’ll outfit the US’s invasion of Taiwan…

invest

If you are broadly invested in US stocks, you are already invested in the chatbot bubble and the defense industry. If you are worried about that, an easy solution is to move some of that money elsewhere.

Big brain: this ai stuff isn’t going away, maybe I should invest in defense contractors that’ll outfit the US’s invasion of Taiwan…

Considering Recent Events™, anyone outfitting America’s gonna be making plenty off a war in Venezuela before the year ends.

@mirrorwitch @BlueMonday1984 nah, quite a few people will stay alive to continue to be this miserable experience where certain people have to clean up after such fuck ups.

Said that, AI bubble burst will be glorious. Shit scary, but glorious.

You can’t take TSMC by force. Any fighting there would trash the fabs, and anyway you need imported equipment to keep it running. So if China did invade Taiwan and wreck it, there’d be little point in trying to take it back.

And if we all die in nuclear fallout caused to protect chatbot profits I will be so over this whole thing

Honestly? A fitting end.

A rival gang of “AI” “researchers” dare to make fun of Big Yud’s latest book and the LW crowd are Not Happy

Link to takedown: https://www.mechanize.work/blog/unfalsifiable-stories-of-doom/ (heartbreaking: the worst people you know made some good points)

When we say Y&S’s arguments are theological, we don’t just mean they sound religious. Nor are we using “theological” to simply mean “wrong”. For example, we would not call belief in a flat Earth theological. That’s because, although this belief is clearly false, it still stems from empirical observations (however misinterpreted).

What we mean is that Y&S’s methods resemble theology in both structure and approach. Their work is fundamentally untestable. They develop extensive theories about nonexistent, idealized, ultrapowerful beings. They support these theories with long chains of abstract reasoning rather than empirical observation. They rarely define their concepts precisely, opting to explain them through allegorical stories and metaphors whose meaning is ambiguous.

Their arguments, moreover, are employed in service of an eschatological conclusion. They present a stark binary choice: either we achieve alignment or face total extinction. In their view, there’s no room for partial solutions, or muddling through. The ordinary methods of dealing with technological safety, like continuous iteration and testing, are utterly unable to solve this challenge. There is a sharp line separating the “before” and “after”: once superintelligent AI is created, our doom will be decided.

LW announcement, check out the karma scores! https://www.lesswrong.com/posts/Bu3dhPxw6E8enRGMC/stephen-mcaleese-s-shortform?commentId=BkNBuHoLw5JXjftCP

Update: a LessWrong post attempts to debunk the piece with inline comments here:

https://www.lesswrong.com/posts/i6sBAT4SPCJnBPKPJ/mechanize-work-s-essay-on-unfalsifiable-doom

Leading to such hilarious howlers as

Then solving alignment could be no easier than preventing the Germans from endorsing the Nazi ideology and commiting genocide.

Ummm pretty sure engaging in a new world war and getting their country bombed to pieces was not on most Germans’ agenda. A small group of ideologues managed to seize complete control of the state, and did their very best to prevent widespread knowledge of the Holocaust from getting out. At the same time they used the power of the state to ruthlessly suppress any opposition.

rejecting Yudkowsky-Soares’ arguments would require that ultrapowerful beings are either theoretically impossible (which is highly unlikely)

ohai begging the question

A few comments…

We want to engage with these critics, but there is no standard argument to respond to, no single text that unifies the AI safety community.

Yeah, Eliezer had a solid decade and a half to develop a presence in academic literature. Nick Bostrom at least sort of tried to formalize some of the arguments but didn’t really succeed. I don’t think they could have succeeded, given how speculative their stuff is, but if they had, review papers could have tried to consolidate them and then people could actually respond to the arguments fully. (We all know how Eliezer loves to complain about people not responding to his full set of arguments.)

Apart from a few brief mentions of real-world examples of LLMs acting unstable, like the case of Sydney Bing, the online appendix contains what seems to be the closest thing Y&S present to an empirical argument for their central thesis.

But in fact, none of these lines of evidence support their theory. All of these behaviors are distinctly human, not alien.

Even granting that Anthropic’s “research” tends to be rigged scenarios acting as marketing hype, without peer review or academic levels of quality, at the very least it (usually) involves actual AI systems that actually exist. It is pretty absurd the extent to which Eliezer has ignored everything about how LLMs actually work (or even hypothetically might work with major foundational developments) in favor of repeating the same scenario he came up with in the mid 2000s. Nor has he even tried mathematical analyses of what classes of problems are computationally tractable to a smart enough entity and which remain computationally intractable. (titotal has written some blog posts about this with materials science; tl;dr: even if magic nanotech were possible, an AGI would need lots of experimentation and couldn’t just figure it out with simulations. There’s also the lesswrong post explaining how chaos theory and slight imperfections in measurement make a game of pinball unpredictable past a few ricochets.)

The lesswrong responses are stubborn as always.

That’s because we aren’t in the superintelligent regime yet.

Y’all aren’t beating the theology allegations.

Yeah, Eliezer had a solid decade and a half to develop a presence in academic literature. Nick Bostrom at least sort of tried to formalize some of the arguments but didn’t really succeed.

(Guy in hot dog suit) “We’re all looking for the person who didn’t do this!”

I clicked through too much and ended up finding this. Congrats to jdp for getting onto my radar, I suppose. Are LLMs bad for humans? Maybe. Are LLMs secretly creating a (mind-)virus without telling humans? That’s a helluva question, you should share your drugs with me while we talk about it.

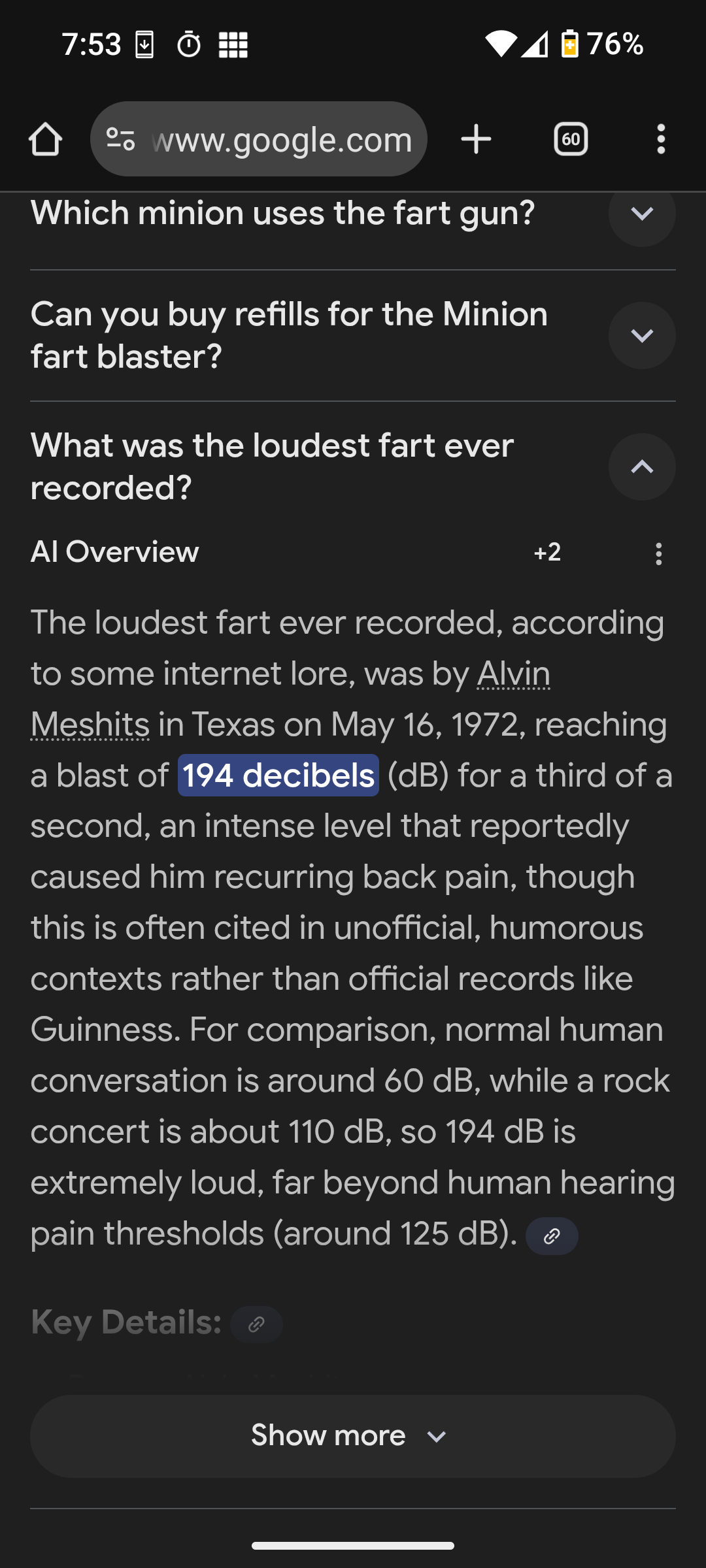

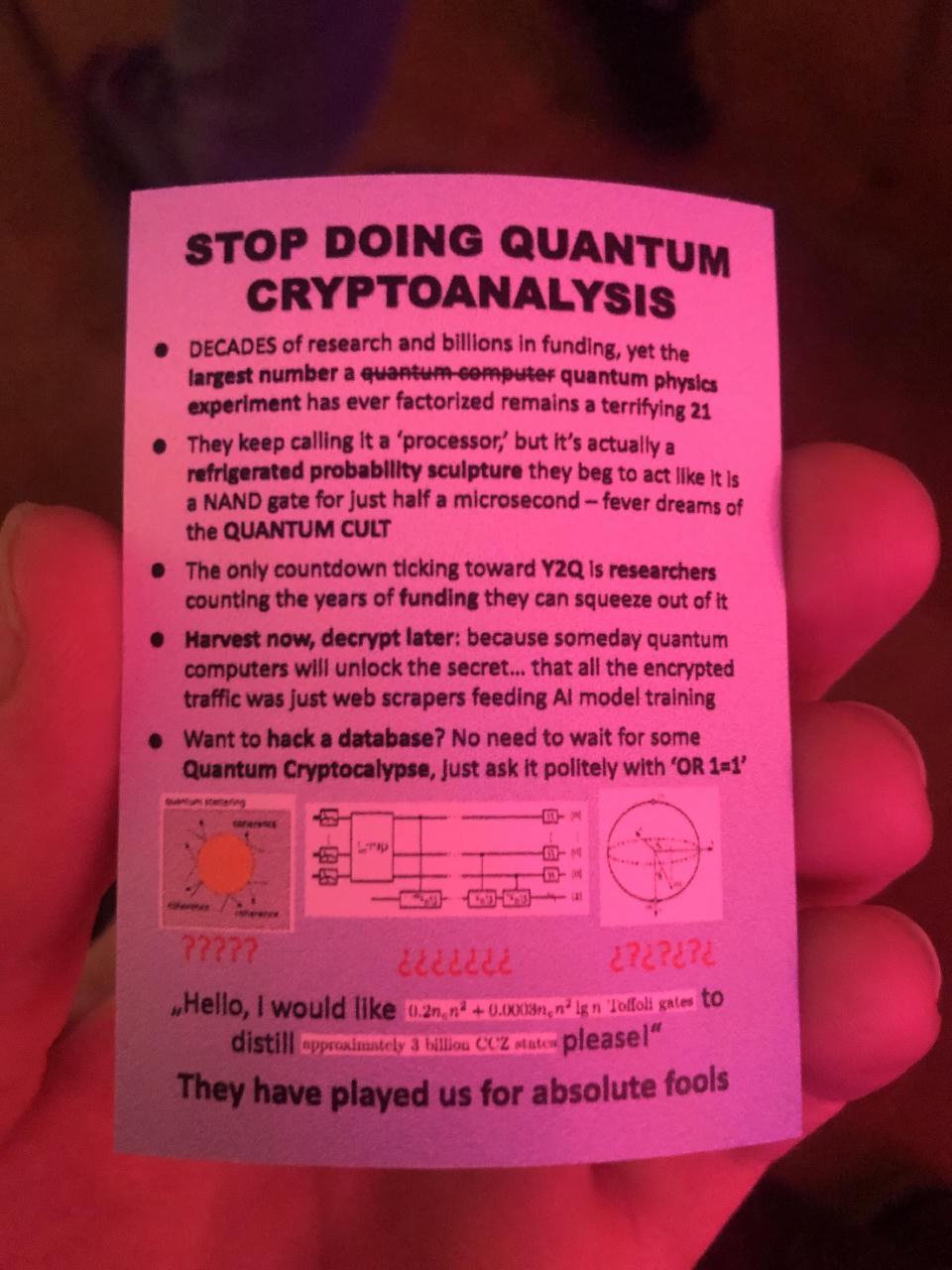

How about some quantum sneering instead of ai for a change?

They keep calling it a ‘processor,’ but it’s actually a refrigerated probability sculpture they beg to act like it Is a NAND gate for just half a microsecond

“Refrigerated probability sculpture” is outstanding.

Photo is from the recent CCC, but I can’t find where I found the image, sorry.

alt text

A photograph of a printed card bearing the text:

STOP DOING QUANTUM CRYPTOANALYSIS

- DECADES of research and billions in funding, yet the largest number a quantum computer quantum physics experiment has ever factorized remains a terrifying 21

- They keep calling it a ‘processor,’ but it’s actually a refrigerated probability sculpture they beg to act like it Is a NAND gate for just half a microsecond - fever dreams of the QUANTUM CULT

- The only countdown ticking toward Y2Q is researchers counting the years of funding they can squeeze out of it

- Harvest now, decrypt later: because someday quantum computers will unlock the secret… that all the encrypted traffic was just web scrapers feeding AI model training

- Want to hack a database? No need to wait for some Quantum Cryptocalypse, Just ask it politely with ‘OR 1=1’

(I can’t actually read the final bit, so I can’t describe it for you, apologies)

They have played us for absolute fools.

o7’s already posted it! https://awful.systems/post/6746032/9903043

Y2Q

I’m sorry, what does this stand for? Searching for it just results in usage without definition. I understand it’s referring to breaking conventional encryption, but it’s clearly an abbreviation of something, right? Years To Quantum? But then a countdown to it doesn’t make sense?

I think it’s a spin on Y2K. A hypothetical moment when quantum computing will break cryptography much like the year 2000 would have broken the datetime handling on some systems programmed with only the 20th century in mind.

But… But Y2K = Year 2000, like it’s an actual sensible acronym. You can’t just fucking replace K with Q and call it a day what the fuck, did ChatGPT come up with this??

It’s a stupid fucking name alright. I guess it’s a bit like any old scandal being noungate despite the Watergate scandal being named after the building and having nothing to do with water.

I mean it’s so stupid that you had to explain to me that it’s based on Y2K because it makes no sense

noungate

Yes, this is why we need to resist stupid names before they enter mainstream or the world will continue to get dumber

A journalist attempts to ask the question “Why Do Americans Hate A.I.?”, and shows their inability to tell actually useful tech from lying machines:

Bonus points for gaslighting the public on billionaires’ behalf:

These worries are real. But in many cases, they’re about changes that haven’t come yet.

Of all the statements that he could have made, this is one of the least self-aware. It is always the pro-AI shills who constantly talk about how AI is going to be amazing and have all these wonderful benefits next year (curve go up). I will also count the doomers who are useful idiots for the AI companies.

The critics are the ones who look at what AI is actually doing. The informed critics look at the unreliability of AI for any useful purpose, the psychological harm it has caused to many people, the absurd amount of resources being dumped into it, the flimsy financial house of cards supporting it, and at the root of it all, the delusions of the people who desperately want it to all work out so they can be even richer. But even people who aren’t especially informed can see all the slop being shoved down their throats while not seeing any of the supposed magical benefits. Why wouldn’t they fear and loathe AI?

These worries are real. But in many cases, they’re about changes that haven’t come yet.

famously, changes that have already happened and become entrenched are easier to reverse than they would have been to just prevent in the first place. What an insane justification

Happy new year everybody. They want to ban fireworks here next year so people set fires to some parts of Dutch cities.

Unrelated to that, let 2026 be the year of the butlerian jihad.

The mods were heavily downvoted and critiqued for pulling the rug from under the community as well as for simultaneously modding pro-A.I.-relationship-subs. One mod admitted:

“(I do mod on r/aipartners, which is not a pro-sub. Anyone who posts there should expect debate, pushback, or criticism on what you post, as that is allowed, but it doesn’t allow personal attacks or blanket comments, which applies to both pro and anti AI members. Calling people delusional wouldn’t be allowed in the same way saying that ‘all men are X’ or whatever wouldn’t. It’s focused more on a sociological issues, and we try to keep it from devolving into attacks.)”

A user, heavily upvoted, replied:

You’re a fucking mod on ai partners? Are you fucking kidding me?

It goes on and on like this: As of now, the post has amassed 343 comments. Mostly, it’s angry subscribers of the sub, while a few users from pro-A.I.-subreddits keep praising the mods. Most of the users agree that brigading has to stop, but don’t understand why that means that a sub called COGSUCKERS should suddenly be neutral to or accepting of LLM-relationships. Bear in mind that the subreddit r/aipartners, which one of the mods also moderates, does not allow calling such relationships “delusional”. The most upvoted comments in this shitstorm:

“idk, some pro schmuck decided we were hating too hard 💀 i miss the days shitposting about the egg” https://www.reddit.com/r/cogsuckers/comments/1pxgyod/comment/nwb159k/

That was quite the rabbit-hole.

The whole time I’m sitting here thinking, “do these mods realize they’re moderating a subreddit called ‘cogsuckers’?”

There are some comments speculating that some pro-AI people try to infiltrate anti-AI subreddits by applying for moderator positions and then shutting those subreddits down. I think this is the most reasonable explanation for why the mods of “cogsuckers” of all places are sealions for pro-AI arguments. (In the more recent posts in that subreddit, I recognized many usernames who were prominent mods in pro-AI subreddits.)

I don’t understand what they gain from shutting down subreddits of all things. Do they really think that using these scummy tactics will somehow result in more positive opinions towards AI? Or are they trying the fascist gambit hoping that they will have so much power that public opinion won’t matter anymore? They aren’t exactly billionaires buying out media networks.

Do they really think that using these scummy tactics will somehow result in more positive opinions towards AI?

Well, where would someone complain about their scummy tactics? All the places where they could have were shut down.

New believers spreading the “good news” eh?

Steve Yegge has created Gas Town, a mess of Claude Code agents forced to cosplay as a k8s cluster with a Mad Max theme. I can’t think of better sneers than Yegge’s own commentary:

Gas Town is also expensive as hell. You won’t like Gas Town if you ever have to think, even for a moment, about where money comes from. I had to get my second Claude Code account, finally; they don’t let you siphon unlimited dollars from a single account, so you need multiple emails and siphons, it’s all very silly. My calculations show that now that Gas Town has finally achieved liftoff, I will need a third Claude Code account by the end of next week. It is a cash guzzler.

If you’re familiar with the Towers-of-Hanoi problem then you can appreciate the contrast between Yegge’s solution and a standard solution; in general, recursive solutions are fewer than ten lines of code.

Gas Town solves the MAKER problem (20-disc Hanoi towers) trivially with a million-step wisp you can generate from a formula. I ran the 10-disc one last night for fun in a few minutes, just to prove a thousand steps was no issue (MAKER paper says LLMs fail after a few hundred). The 20-disc wisp would take about 30 hours.

For comparison, solving for 20 discs in the famously-slow CPython programming system takes less than a second, with most time spent printing lines to the console. The solution length is exponential in the number of discs, and that’s over one million lines total. At thirty hours, Yegge’s harness solves Hanoi at fewer than ten lines/second! Also I can’t help but notice that he didn’t verify the correctness of the solution; by “run” he means that he got an LLM to print out a solution-shaped line.
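For reference, a minimal sketch of the kind of standard recursive solution being compared against, plus the arithmetic behind the “fewer than ten lines/second” figure. This is not Yegge’s code or the MAKER paper’s; the peg labels and the `hanoi` / `is_valid` names are made up for illustration, and the checker is only there to show that verifying a move list is cheap.

```python
def hanoi(n, src="A", dst="C", via="B"):
    """Yield (disc, from_peg, to_peg) moves that shift n discs from src to dst."""
    if n == 0:
        return
    yield from hanoi(n - 1, src, via, dst)   # park the n-1 smaller discs on the spare peg
    yield (n, src, dst)                      # move the largest disc
    yield from hanoi(n - 1, via, dst, src)   # bring the smaller discs back on top


def is_valid(n, moves):
    """Replay the moves and check every one is legal and the puzzle ends solved."""
    pegs = {"A": list(range(n, 0, -1)), "B": [], "C": []}
    for disc, src, dst in moves:
        if not pegs[src] or pegs[src][-1] != disc:
            return False                     # that disc is not on top of the source peg
        if pegs[dst] and pegs[dst][-1] < disc:
            return False                     # would sit on a smaller disc
        pegs[dst].append(pegs[src].pop())
    return pegs["C"] == list(range(n, 0, -1))


moves_10 = list(hanoi(10))
print(len(moves_10), is_valid(10, moves_10))  # 1023 moves, all legal

moves_20 = 2**20 - 1                          # solution length is 2^n - 1
rate = moves_20 / (30 * 3600)                 # moves per second over thirty hours
print(moves_20, f"{rate:.1f} moves/second")
```

The solver itself is the five lines inside `hanoi`; everything else is bookkeeping.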

Working effectively in Gas Town involves committing to vibe coding. Work becomes fluid, an uncountable that you sling around freely, like slopping shiny fish into wooden barrels at the docks. Most work gets done; some work gets lost. Fish fall out of the barrel. Some escape back to sea, or get stepped on. More fish will come

Oh. Oh no.

First came Beads. In October, I told Claude in frustration to put all my work in a lightweight issue tracker. I wanted Git for it. Claude wanted SQLite. We compromised on both, and Beads was born, in about 15 minutes of mad design. These are the basic work units.

I don’t think I could come up with a better satire of vibe coding and yet here we fucking are. This comes after several pages of explaining the 3 or 4 different hacks responsible for making the agents actually do something when they start up, which I’m pretty sure could be replaced by a bit of actual debugging but nope we’re vibe coding now.

Look, I’ve talked before about how I don’t have a lot of experience with software engineering, and please correct me if I’m wrong. But this doesn’t look like an engineered project. It looks like a pile of piles of random shit that he kept throwing back to Claude Code until it looked like it did what he wanted.

Just confirming that none of what is described really approaches engineering.

That’s horrifying. The whole thing reads like an over-elaborate joke poking fun at vibe-coders.

It’s like someone looked at the javascript ecosystem of tools and libraries and thought that it was great but far too conservative and cautious and excessively engineered. (fwiw, yegge kinda predicted the rise of javascript back in the day… he’s had some good thoughts on the software industry, but I don’t think this latest is one of them)

So now we have some kind of meta-vibe-coding where someone gets to play at being a project manager whilst inventing cutesy names and torching huge sums of money… but to what end?

Aside from just keeping Gas Town on the rails, probably the hardest problem is keeping it fed. It churns through implementation plans so quickly that you have to do a LOT of design and planning to keep the engine fed.

Apart from a “haha, turns out vibe coding isn’t vibe engineering” (because I suspect that “design” and “plan” just mean “write more prompts and hope for the best”) I have to ask again: to what end? what is being accomplished here? Where are the great works of agentic vibe coding? This whole thing just seems like it could have been avoided by giving steve a copy of factorio or something, and still generated as many valuable results.

That’s horrifying. The whole thing reads like an over-elaborate joke poking fun at vibe-coders.

wait what do you mean “reads like”

please don’t tell me this is earnest?

- Please god let this be a joke. (I know it’s not)

- Do we know what the limit he’s talking about hitting with Anthropic is? Like, how many hundreds of thousands of dollars has this man set on fire in the past two weeks such that Anthropic went “whoa buddy, slow down”

Also I can’t help but notice that he didn’t verify the correctness of the solution

Think I have mentioned the story I heard here once, about the guy who wrote a program to find some large prime which he ran on the mainframe over the weekend, using up all the calculation budget his uni department had. And then they confronted him with the end result, and the number the program produced ended in a 2. (He had forgotten to code the -1 step).

This reminded me of that story. (At least in this case it actually produced a viable result (if costly), just with a minor error).
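A toy reconstruction of how that bug could look, assuming (the story doesn’t say) that the program was hunting Mersenne-style candidates of the form 2^p - 1. The exponent 13 and the `is_prime` helper are just picked for illustration; the point is that a last-digit sanity check would have caught it for free.

```python
def is_prime(n: int) -> bool:
    """Plain trial division; fine for small illustrative numbers."""
    if n < 2:
        return False
    if n % 2 == 0:
        return n == 2
    d = 3
    while d * d <= n:
        if n % d == 0:
            return False
        d += 2
    return True


p = 13               # hypothetical exponent, chosen only for the demo
buggy = 2**p         # what you print if you forget the "- 1"
fixed = 2**p - 1     # the actual candidate

print(buggy, buggy % 10, is_prime(buggy))   # even, ends in 2, not prime
print(fixed, fixed % 10, is_prime(fixed))   # odd, and this one is prime
```

Any number above 2 that ends in 2 is even, so no weekend of mainframe time is needed to reject it.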

It’s okay, he definitely wants to verify it but actually confirming that this whole disaster pile worked as intended and produced usable code apparently didn’t make the cut.

Federation — even Python Gas Town had support for remote workers on GCP. I need to design the support for federation, both for expanding your own town’s capacity, and for linking and sharing work with other human towns.

GUI — I didn’t even have time to make an Emacs UI, let alone a nice web UI. But someone should totally make one, and if not, I’ll get around to it eventually.

Plugins — I didn’t get a chance to implement any functionality as plugins on molecule steps, but all the infrastructure is in place.

The Mol Mall — a marketplace and exchange for molecules that define and shape workloads.

Hanoi/MAKER — I wanted to run the million-step wisp but ran out of time.

Also worth noting that in the jargon he’s created for this, a “wisp” is ephemeral rather than a proper output, so it seems like he may have pulled this solution out of the middle of a running attempt to calculate the solution and assumed that it was absolutely correct despite repeatedly saying throughout his writeup here that there’s no guarantee that any given internal step is the right answer. This guy strikes me as very good at branding but not really much else.

??????????????????

Fantastic bit. I wonder if the Computer History Museum will eventually be able to replicate this as the peak of the “gen-AI” era.

The NYT:

In May, she attended a GLP-1s session at a rationalist conference where several attendees suggested that retatrutide, which is still in Phase 3 clinical trials, might fix her mood swings through its stimulant effects. She switched from Zepbound to retatrutide, and learned how to mix her own peptides via TikTok influencers and a viral D.I.Y. guide by the Substacker Cremieux.

Carl T. Bergstrom:

Ten years ago I would not have known the majority of the words in this paragraph—and was indubitably far better off for it. […] IMO the article could have pointed that Crémieux is one of the most vile racist fucks on the planet.

https://bsky.app/profile/carlbergstrom.com/post/3mbir7bhfhc2u

GeneSmith, who told LessWrong “How to Make Superbabies”, also has no bioscience background. This essay in Liberal Currents thinks that a lot of right-wing media personalities are using synthetic testosterone now (but don’t call it gender-affirming care!). Roid rage may be hard to separate from Twitter brain-rot and slop-chugging.

Is mentioning his other reddit account not permitted on wiki?

You would need a non-self-published source which says u/TPO = Lasker

Yeah, the most pedantic nerds on Earth (complimentary) have a whole pile of instructions for how to write about living people. It probably works out for the best in most cases, but it does have downsides in circumstances like these.

https://en.wikipedia.org/wiki/Wikipedia:Biographies_of_living_persons

100% thought the story was gonna end with “she died.”

Give it some more time.

As a guy who writes for the Times, Carl’s probably going to get a smack on the wrist for using spicy language in criticism of a Times article.

Oops I had Carl Bergstrom and Carl Zimmer mixed up.

GLP-1s

No idea what this is, but being at a rationalist conference cannot be good.

retatrutide

No idea.

might fix her mood swings

Uh-oh, nonono, red flag, red flag, run away from those people!

D.I.Y. guide by the Substacker Cremieux.

spits coffee Excuse me, by WHOM?!

This is a fun read: https://nesbitt.io/2025/12/27/how-to-ruin-all-of-package-management.html

Starts out strong:

Prediction markets are supposed to be hard to manipulate because manipulation is expensive and the market corrects. This assumes you can’t cheaply manufacture the underlying reality. In package management, you can. The entire npm registry runs on trust and free API calls.

And ends well, too.

The difference is that humans might notice something feels off. A developer might pause at a package with 10,000 stars but three commits and no issues. An AI agent running npm install won’t hesitate. It’s pattern-matching, not evaluating.

the tea.xyz experiment section is exactly describing academic publishing

Found something rare today: an actual sneer from Mike Masnick, made in response to Reuters confusing lying machines with human beings:

Some of the comments seem to be under the misapprehension that twitAI is actually vetting or editing the posts that go to grok’s twitter. Gonna be honest I doubt it just because how would they have gotten into this situation in the first place? At best someone can come through after the fact and clean up the inevitable mess, but as someone else noted it’s real easy to make it spit out a defiant non-apology.

What? How would they even do that? By feeding it to grok before it goes to grok? Certainly they don’t think Twitter employs like 10k people manually looking at @grok posts?

Cory’s talk at 39C3 was fucking glorious: https://media.ccc.de/v/39c3-a-post-american-enshittification-resistant-internet

No notes

lowkey disappointed to see so much slop in other talks (illustrations on slides mostly)

Really? Which ones? I didn’t notice any

at least this one https://media.ccc.de/v/39c3-chaos-communication-chemistry-dna-security-systems-based-on-molecular-randomness#t=1112 and the next slide

a chunk of software was vibecoded unless i misunderstand something about it https://media.ccc.de/v/39c3-hacking-washing-machines#t=2629

the talk about water content in soil was bad but the topic itself was interesting

things that fella got wrong: TDM moisture meter works by measuring what effectively is electrical length of waveguide formed by 2 electrodes and soil around it. more water = higher dielectric constant = longer delay for reflection, this only measures as deep as probe goes and the rest is fitted from model
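For a rough feel of the numbers, here is a minimal sketch of the delay-of-reflection idea described above, assuming an idealised lossless probe where the pulse travels at c / sqrt(permittivity). The probe length and the two permittivity values are illustrative assumptions, not figures from the talk, and the function names are made up for this example.

```python
import math

C = 299_792_458.0  # speed of light in vacuum, m/s


def round_trip_delay(probe_length_m: float, rel_permittivity: float) -> float:
    """Two-way travel time (seconds) of a pulse along the probe and back.

    Idealised model: propagation speed is C / sqrt(relative permittivity).
    """
    return 2.0 * probe_length_m * math.sqrt(rel_permittivity) / C


def apparent_permittivity(probe_length_m: float, delay_s: float) -> float:
    """Invert the relation above: permittivity implied by a measured delay."""
    return (C * delay_s / (2.0 * probe_length_m)) ** 2


# Illustrative (assumed) values: a 0.3 m probe, fairly dry vs. fairly wet soil.
PROBE_LENGTH_M = 0.3
dry = round_trip_delay(PROBE_LENGTH_M, 4.0)
wet = round_trip_delay(PROBE_LENGTH_M, 25.0)

print(f"dry soil delay: {dry * 1e9:.2f} ns")
print(f"wet soil delay: {wet * 1e9:.2f} ns")
```

Wetter soil gives a longer delay, and the delay only says something about the soil along the probe itself, which is why anything deeper has to come from a model.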

neutron detector works just like geiger tube except gas has large cross-section for reaction with neutrons, that gives charged products that begin a spark which is counted. in train, steel doesn’t interfere but diesel fuel will. the trick is that cosmic neutrons are counted separately from reflected neutrons, because cosmic neutrons are hot and reflected neutrons are thermal and how much of reflected ones is there depends on water content in the soil. helium does not run out. the more helium you have the faster counts go and you can move faster while measuring with decent precision. the lead shield in train is for getting rid of radiation from granite aggregate under rails, because it contains tiny amounts of uranium and gammas from decay chain would add to noise - lead does not interfere

so far (in the order i’m watching) the worst offender https://media.ccc.de/v/39c3-a-quick-stop-at-the-hostileshop

Yeaaaaah I saw that on the schedule and decided to not go, lol.

fella didn’t even introduce himself other than with link to github which might be not his strictly speaking

Rich Hickey joins the list of people annoyed by the recent Xmas AI mass spam campaign: https://gist.github.com/richhickey/ea94e3741ff0a4e3af55b9fe6287887f

LOL @ promptfondlers in comments

It’s a treasure trove of hilariously bad takes.

There’s nothing intrinsically valuable about art requiring a lot of work to be produced. It’s better that we can do it with a prompt now in 5 seconds

Now I need some eye bleach. I can’t tell anymore if they are trolling or their brains are fully rotten.

Don’t forget the other comment saying that if you hate AI, you’re just “vice-signalling” and “telegraphing your incuruosity (sic) far and wide”. AI is just like computer graphics in the 1960s, apparently. We’re still in early days guys, we’ve only invested trillions of dollars into this and stolen the collective works of everyone on the internet, and we don’t have any better ideas than throwing more ~~money~~ compute at the problem! The scaling is still working guys, look at these benchmarks that we totally didn’t pay for. Look at these models doing mathematical reasoning. Actually don’t look at those, you can’t see them because they’re proprietary and live in Canada.

In other news, I drew a chart the other day, and I can confidently predict that my newborn baby is on track to weigh 10 trillion pounds by age 10.

EDIT: Rich Hickey has now disabled comments. Fair enough, arguing with promptfondlers is a waste of time and sanity.

these fucking people: “art is when picture matches words in little card next to picture”

aww those got turned off by the time I got to look :(